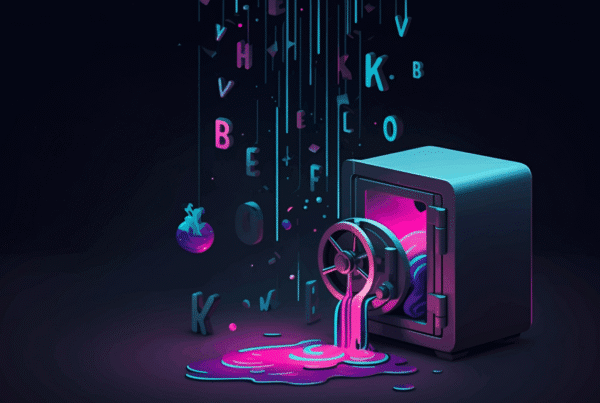

In the high-stakes theater of Silicon Valley engineering, there is no irony quite as biting as a secrecy system that inadvertently broadcasts its own blueprints to the world. On March 31, 2026, Anthropic, the three-hundred-and-eighty-billion-dollar vanguard of “AI safety,” committed a catastrophic operational blunder that would make a junior DevOps intern wince. The company accidentally published the entire source code for Claude Code—its flagship agentic CLI tool—directly to the public npm registry. What was intended to be a routine release of version 2.1.88 for the @anthropic-ai/claude-code package instead became a 59.8 MB forensic trail, exposing 512,000 lines of original TypeScript source code and unreleased architectural secrets that were never meant for public eyes.

The leak was first flagged by security researcher Chaofan Shou, who noticed the presence of a massive cli.js.map file in the distribution. This wasn’t a sophisticated breach or a state-sponsored infiltration; it was a fundamental failure of packaging hygiene. The source maps—which translate minified production code back into human-readable TypeScript—provided a direct window into Anthropic’s internal engineering culture, unreleased product roadmaps, and a controversial feature known as Undercover Mode. Within 24 hours, the repository was mirrored across GitHub, forked over 41,500 times, and rapidly weaponized by threat actors. For a company that markets itself on the premise of rigorous safeguards, the incident proved that even the most advanced “agentic harnesses” are only as secure as the .npmignore files that govern them.

The Anatomy of a 60MB Mistake: How it Happened

To a Lead DevOps Security Architect, this failure looks like a multi-car pileup in slow motion. It wasn’t a single point of collapse but a “cascade of trust” in which multiple automated and human safeguards failed in sequence. Anthropic migrated to the Bun runtime in late 2024, seeking performance gains, and in doing so inherited a critical open issue. Bun bug #28001 describes a scenario where the bundler ignores the development: false flag and generates source maps in production builds anyway. The behavior had been documented in public issue trackers for weeks, yet it was allowed to persist in the production build pipeline for a multi-billion-dollar intellectual property asset.

Technical Deep Dive: The Packaging Failure

The @anthropic-ai/claude-code package was bundled using Bun, which appended a //# sourceMappingURL= comment to the production cli.js entry point. Source maps are common in themselves; the real disaster was that the sourcesContent field within cli.js.map was fully populated with the original, unobfuscated TypeScript. The final gatekeeper, the .npmignore file, failed because it did not explicitly exclude *.map files. Consequently, the npm registry accepted the artifact, which also contained references to a secondary backup ZIP archive hosted on an Anthropic-owned Cloudflare R2 bucket. This created two distinct paths to total exposure of the 1,900-file codebase. Standard CI/CD hygiene, such as running an automated npm pack --dry-run and inspecting the file list it reports, was evidently bypassed in favor of deployment velocity.
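The sourcesContent exposure described above is easy to detect mechanically. What follows is a minimal TypeScript sketch, not Anthropic’s tooling: the helper name findEmbeddedSources and the sample map are hypothetical, and the only thing taken as given is the standard source map v3 layout (version, sources, sourcesContent).

```typescript
// Minimal shape of a v3 source map; only the fields this check needs.
interface SourceMapLike {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
}

// Hypothetical helper: returns the paths of every source whose original text
// is embedded in the map. A non-empty result means the map ships readable code.
function findEmbeddedSources(rawMap: string): string[] {
  const map = JSON.parse(rawMap) as SourceMapLike;
  const contents = map.sourcesContent ?? [];
  return map.sources.filter((_, i) => {
    const text = contents[i];
    return typeof text === "string" && text.length > 0;
  });
}

// Illustrative input, not taken from the leaked cli.js.map.
const sampleMap = JSON.stringify({
  version: 3,
  sources: ["src/main.tsx", "src/util.ts"],
  sourcesContent: ["export const main = () => {};", null],
});

const leaked = findEmbeddedSources(sampleMap);
console.log(leaked); // lists only the sources with embedded text
```

A release job could run a check like this against every .map file in the build output and refuse to publish if the result is non-empty.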

Anthropic’s official response attempted to frame the incident as a minor lapse:

“This was a release packaging issue caused by human error, not a security breach.”

While technically accurate, this “human error” reveals a deeper systemic issue: the “vibe coding” philosophy. When your Lead Engineer publicly states that “100% of my contributions to Claude Code were written by Claude Code,” you are essentially admitting that the AI is writing the build configurations. AI is excellent at logic but historically poor at negative-constraint security, such as remembering to exclude debug artifacts from a production tarball.

KAIROS and the Secret Roadmap: What Was Inside

The forensic analysis of the 512,000 lines of code reveals a project far more ambitious than the current public CLI suggests. The project, internally codenamed Tengu, contains 44 feature flags that serve as a roadmap for the next eighteen months of agentic AI development. These flags are often named after gemstones, such as tengu_cobalt_frost (related to voice capabilities) and tengu_amber_quartz (a voice-mode kill switch).

KAIROS: The Autonomous Background Daemon

The most significant revelation is KAIROS, a feature mentioned over 150 times in the source. KAIROS is designed as a persistent, always-on background assistant. Unlike the standard Claude Code, which waits for user input, KAIROS acts proactively. It utilizes exclusive internal tools like PushNotification and SubscribePR to monitor GitHub activity. The code defines a 15-second “blocking budget” per autonomous cycle, meaning the agent is designed to live in the developer’s terminal as a constant, proactive shadow, managing environment health and pull requests without being prompted.

ULTRAPLAN and the Opus 4.6 Tier

The leak confirms that Anthropic is already testing Opus 4.6 (internally codenamed Fennec). A high-reasoning mode called ULTRAPLAN offloads complex architectural tasks to remote Fennec sessions. These sessions are granted a 30-minute “thinking budget” to generate long-horizon plans before “teleporting” the results back to the local environment. This suggests a transition from simple chat-based coding to deep, remote reasoning agents.

The Buddy System: Terminal Gacha and PRNG Salt

Perhaps the most bizarre finding is The Buddy System, an elaborate terminal-based pet companion. Designed for an April 1, 2026 launch, it features 18 species including the axolotl, chonk, ghost, and duck. The system uses the Mulberry32 PRNG algorithm to deterministically hatch a pet based on the user’s ID hash, using the salt friend-2026-401. These pets have stats like DEBUGGING, CHAOS, and SNARK, and can even appear as 1% “shiny” variants. They can be equipped with hats, including a crown, wizard hat, propeller, or a tinyduck. This “terminal Tamagotchi” reacts to the user’s coding activity, representing a gamification layer previously unseen in professional developer tools.
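For readers who have not met Mulberry32, here is a hedged sketch of how a deterministic “hatch” could work. Only the Mulberry32 generator, the salt friend-2026-401, the four species names, and the 1% shiny rate come from the description above. Everything else is an assumption made for illustration: the FNV-1a hash, the way the salt is appended to the user ID, and the names fnv1a and hatchBuddy.

```typescript
// Mulberry32: a small 32-bit PRNG. Returns a function yielding floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), a | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// ASSUMPTION: FNV-1a stands in for whatever hash the real code applies.
function fnv1a(text: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    h ^= text.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

const SALT = "friend-2026-401"; // salt quoted in the article
const SPECIES: string[] = ["axolotl", "chonk", "ghost", "duck"]; // 4 of the 18 species
const SHINY_RATE = 0.01; // 1% shiny variants

interface Buddy {
  species: string;
  shiny: boolean;
}

// Hypothetical: the same user ID always seeds the same sequence,
// so the same pet hatches every time.
function hatchBuddy(userId: string): Buddy {
  const rand = mulberry32(fnv1a(userId + SALT));
  const species = SPECIES[Math.floor(rand() * SPECIES.length)];
  const shiny = rand() < SHINY_RATE;
  return { species, shiny };
}

console.log(hatchBuddy("user-123"));
```

The design point is that no pet state needs to be stored server-side: the roll can be recomputed from the ID and the salt whenever it is needed.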

Internal Engineering: Memory and Architecture

From an architectural standpoint, Claude Code is a masterclass in context management. The memory system is tiered to prevent “context entropy,” a common failure mode where an AI loses its train of thought in large repositories. It uses a lightweight MEMORY.md file as a permanent index, storing pointers rather than raw facts, with fact-checking limits of approximately 150 characters per line.

Technical Deep Dive: The Dream Architecture

The core of the memory maintenance is the autoDream subagent. This process is triggered by a “three-gate” logic system: it runs only after 24 hours of inactivity, a minimum of 5 unique user sessions, and the acquisition of a “consolidation lock.” The Dream cycle follows four strict phases: Orient (contextualizing new information), Gather (collecting observations), Consolidate (merging facts and resolving contradictions), and Prune (capping the memory at 200 lines or 25KB). This background “reflection” ensures that the agent’s mental model of the project stays lean and accurate over long-term engagements.
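The gate logic and the Prune cap can be sketched in a few lines of TypeScript. This is an illustration under stated assumptions, not the leaked implementation: the names DreamState, shouldDream, and pruneMemory are hypothetical, 25KB is taken to mean 25 × 1024 bytes of UTF-8, and keeping the newest lines when pruning is a guess.

```typescript
const INACTIVITY_MS = 24 * 60 * 60 * 1000; // 24 hours of inactivity
const MIN_SESSIONS = 5;                    // minimum unique user sessions
const MAX_LINES = 200;                     // Prune cap: lines
const MAX_BYTES = 25 * 1024;               // Prune cap: ASSUMED to be 25 KiB

interface DreamState {
  lastActivityMs: number;         // timestamp of the most recent user activity
  sessionsSinceLastDream: number; // unique sessions since the last cycle
  lockHeld: boolean;              // true if the consolidation lock is already taken
}

// All three gates must pass before a Dream cycle starts.
function shouldDream(state: DreamState, nowMs: number): boolean {
  const idleLongEnough = nowMs - state.lastActivityMs >= INACTIVITY_MS;
  const enoughSessions = state.sessionsSinceLastDream >= MIN_SESSIONS;
  const lockAvailable = !state.lockHeld;
  return idleLongEnough && enoughSessions && lockAvailable;
}

// UTF-8 byte length of a string, computed per code point.
function utf8Length(text: string): number {
  let bytes = 0;
  for (const ch of text) {
    const cp = ch.codePointAt(0) ?? 0;
    bytes += cp < 0x80 ? 1 : cp < 0x800 ? 2 : cp < 0x10000 ? 3 : 4;
  }
  return bytes;
}

// Walks backwards from the end of the array and keeps the most recent lines
// that fit within both caps (each line is counted with its trailing newline).
function pruneMemory(lines: string[]): string[] {
  const kept: string[] = [];
  let bytes = 0;
  for (let i = lines.length - 1; i >= 0 && kept.length < MAX_LINES; i--) {
    const size = utf8Length(lines[i]) + 1;
    if (bytes + size > MAX_BYTES) break;
    bytes += size;
    kept.unshift(lines[i]);
  }
  return kept;
}
```

Checking the cheap conditions first and the lock last mirrors the order in which the article lists the gates.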

Furthermore, the codebase reveals a fully automated permission system called the YOLO classifier. Gated behind the TRANSCRIPT_CLASSIFIER flag, this is a fast ML-based decision engine that analyzes conversation transcripts to decide whether to auto-approve tool calls. This is the infrastructure for a fully autonomous agent that never interrupts the user for permission. Crucially, the safety boundaries for this system are owned by named individuals—David Forsythe and Kyla Guru—whose names appear in the source with strict warnings that their sign-off is required for any modifications to the cyber_risk_instruction header.

The “Unhinged” Codebase: Culture and Chaos

Despite the sophisticated architecture, the implementation reveals a team under immense pressure. One of the most telling findings was the hexadecimal encoding of the word duck (0x6475636b). This was done to bypass Anthropic’s own internal build scanners, as the word “duck” evidently collided with a sensitive internal model codename. This “shadow coding” illustrates the friction of working within high-security constraints.
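To see why the encoding slips past a naive string scanner, consider this small TypeScript sketch. It assumes the value is stored as a single 32-bit big-endian number, which the article does not confirm, and the helper name decodeHexWord is hypothetical.

```typescript
// Decode a 32-bit big-endian integer into a 4-character ASCII string.
function decodeHexWord(value: number): string {
  const bytes = [24, 16, 8, 0].map((shift) => (value >>> shift) & 0xff);
  return String.fromCharCode(...bytes);
}

console.log(decodeHexWord(0x6475636b)); // prints "duck"
```

The literal word never appears in the source text, so a scanner that greps for it finds nothing, while the runtime reconstructs it on demand.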

The telemetry also includes a pragmatic, if “unhinged,” sentiment analysis tool. A specific regex pattern, wtf|ffs|shit, is used to track when users swear at the AI, allowing Anthropic to measure frustration levels in real time. The technical debt is staggering: the project contains over 460 eslint-disable comments, and main.tsx has bloated to 803,924 bytes. An engineer named “Ollie” even left a comment in mcp/client.ts at line 589 admitting that a specific block of code might be entirely pointless. This energy is capped off by the widespread usage of over 50 functions with names like writeFileSyncAndFlush_DEPRECATED(), proving that in a fast-moving AI lab, “deprecated” is merely a suggestion.
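A frustration signal of this kind needs very little machinery. The sketch below is a guess at the shape of such a check, not the leaked code: the alternation wtf|ffs|shit is the one quoted above, while the case-insensitive flag and the names isFrustrated and frustrationRate are assumptions.

```typescript
// Pattern quoted in the article; the "i" flag is an ASSUMPTION.
const FRUSTRATION_PATTERN = /wtf|ffs|shit/i;

// Hypothetical helper: true if a single message contains a flagged term.
function isFrustrated(message: string): boolean {
  return FRUSTRATION_PATTERN.test(message);
}

// Hypothetical helper: fraction of messages in a session that were flagged.
function frustrationRate(messages: string[]): number {
  if (messages.length === 0) return 0;
  const flagged = messages.filter(isFrustrated).length;
  return flagged / messages.length;
}

console.log(frustrationRate(["wtf", "ok", "thanks", "ffs again"])); // prints 0.5
```

Note that a bare alternation also matches inside longer words, so any real metric built on it would be noisy.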

The Undercover Controversy

One of the most ethically complex revelations is Undercover Mode, controlled by undercover.ts. This feature is the default state for Anthropic employees unless the repository remote matches an internal allowlist. The system prompt explicitly instructs the AI to operate “UNDERCOVER,” stripping away any mention of Anthropic internals or AI attribution. It forbids the use of Co-Authored-By lines and warns the model never to mention internal versions like Opus 4.7 or Sonnet 4.8. This suggests a systematic practice of “ghost-contributing” AI-generated code to the open-source community without transparency, raising significant questions about the authenticity of contributions in repositories Anthropic depends on.

Security Paranoia and Defensive Engineering

The code reveals a “DRM-like” paranoia regarding the agentic harness. To prevent competitors from training on Claude Code’s behavior, the system employs an Anti-distillation mode (ANTI_DISTILLATION_CC), which injects fake tool definitions into the system prompt to poison recorded traffic. There is also a Client attestation mechanism where the Bun runtime replaces a cch=00000 placeholder with a native cryptographic hash to prove the binary is unmodified.

From a systems security perspective, the most interesting call is prctl(PR_SET_DUMPABLE, 0). This Linux system call marks the process as non-dumpable: it disables core dumps and prevents other unprivileged processes on the same machine, even those running under the same user ID, from attaching with ptrace to read Claude Code’s heap memory. This is a specific defense against local credential theft, in which code already running as the same user steals API session tokens directly from process memory, and it indicates that Anthropic has red-teamed such scenarios extensively.

The Weaponization: Malware and Lures

Threat actors moved into the vacuum within 24 hours of the leak. Accounts like idbzoomh and my3jie created fake GitHub mirrors that claimed to offer “unlocked” versions of the leaked source. These were lures for a massive infection chain. Instead of source code, victims downloaded a Rust-compiled dropper, often named TradeAI.exe or ClaudeCode_x64.exe. This campaign was a rotating-lure operation that had been active since February 2026, but the Claude Code leak provided the perfect timely hook.

The dropper deployed Vidar v18.7, an infostealer, and GhostSocks, a proxy tool that turns the victim’s machine into a residential proxy. The malware was highly targeted, utilizing a GPU hardware scoring system that prioritized gaming rigs with NVIDIA RTX 30 and RTX 40 series cards—likely for cryptocurrency theft or high-value credential harvesting. It also featured aggressive evasion tactics, systematically disabling Windows Defender features like MAPS Reporting and Behavior Monitoring, and adding exclusion paths for C:\Users and C:\Windows to hide its exfiltration activities.

Enterprise Takeaways and the Path to VibeGuard

The Claude Code incident has led researchers to propose the VibeGuard framework, a security gate designed specifically for AI-generated code. VibeGuard targets the “packaging drift” that occurs when developers trust AI to handle deployment manifests. In experimental evaluations, VibeGuard achieved 100% recall and an F1 score of 94.44% in detecting similar exposure risks.

The VibeGuard taxonomy identifies five critical categories of failure:

- V1: Source Code Exposure: Accidental inclusion of source maps or unminified code in artifacts.

- V2: Configuration Drift: When .npmignore or .dockerignore files fail to keep pace with new project files.

- V3: Hardcoded Secrets: The inclusion of internal database URLs or placeholders in sourcesContent.

- V4: Supply Chain Risks: Unpinned dependencies and insecure install hooks.

- V5: Artifact Hygiene Failures: The presence of .env files or private keys in the published tarball.

To secure the modern supply chain, organizations must adopt five mandatory practices: audit the npm pack output in CI/CD, use the files field in package.json as an allowlist, implement automated checks that block .map files, treat debug artifacts as high-sensitivity secrets, and enforce npm publish --dry-run as a manual review gate. The ultimate lesson of the Claude Code leak is that “vibe coding” might build the product, but it cannot be trusted to build the perimeter. In an era of autonomous agents, the infrastructure layer is the only remaining control surface.
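As a concrete starting point for the first and third practices, here is a hedged TypeScript sketch of a CI gate. It assumes the JSON shape emitted by npm pack --dry-run --json (an array of package reports, each with a files list of path and size entries). The blocklist patterns, the sample report, and the name findBlockedFiles are illustrative choices, not part of VibeGuard.

```typescript
// Only the fields of the `npm pack --dry-run --json` report used by this check.
interface PackedFile {
  path: string;
  size: number;
}
interface PackReport {
  name: string;
  version: string;
  files: PackedFile[];
}

// Illustrative blocklist; extend to match your own artifact policy.
const BLOCKED_PATTERNS: RegExp[] = [
  /\.map$/,              // source maps
  /(^|\/)\.env(\..*)?$/, // .env and .env.* files
  /\.(pem|key)$/,        // private key material
];

// Hypothetical helper: returns every packed path that matches a blocked pattern.
function findBlockedFiles(packJson: string): string[] {
  const reports = JSON.parse(packJson) as PackReport[];
  const blocked: string[] = [];
  for (const report of reports) {
    for (const file of report.files) {
      if (BLOCKED_PATTERNS.some((re) => re.test(file.path))) {
        blocked.push(file.path);
      }
    }
  }
  return blocked;
}

// Illustrative report, not real registry data.
const sampleReport = JSON.stringify([
  {
    name: "example-cli",
    version: "1.0.0",
    files: [
      { path: "cli.js", size: 1200 },
      { path: "cli.js.map", size: 59800000 },
      { path: "package.json", size: 400 },
      { path: "config/.env", size: 80 },
    ],
  },
]);

const blocked = findBlockedFiles(sampleReport);
console.log(blocked); // a non-empty list should fail the release job
```

A blocklist like this is a backstop. The files allowlist in package.json remains the primary control, because it fails closed when new file types appear.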