Introduction: The Night the Blueprints Went Public

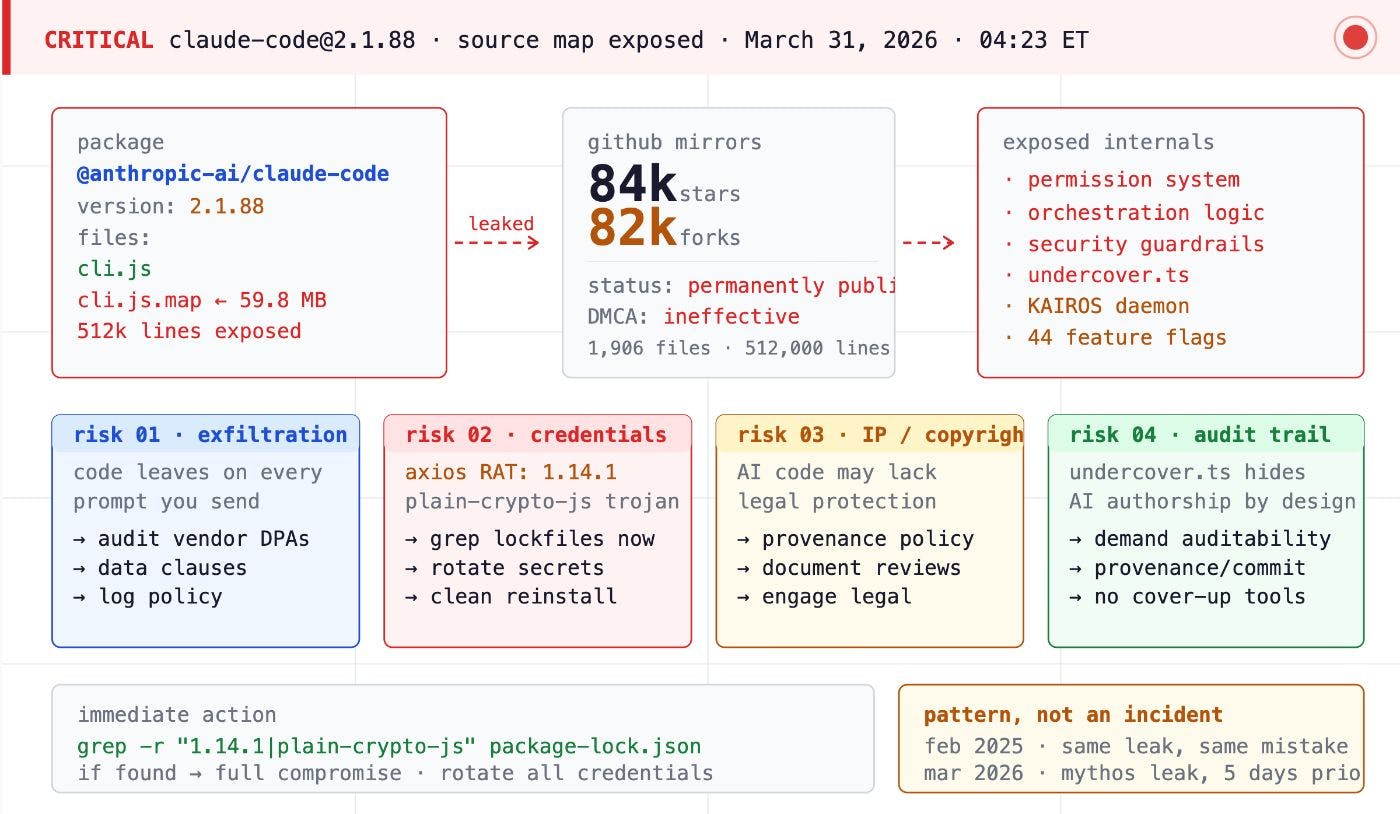

On March 31, 2026, the architectural blueprints for the world’s most advanced AI coding interface were inadvertently left in plain sight. Security researcher Chaofan Shou discovered that version 2.1.88 of the @anthropic-ai/claude-code package, hosted on the public NPM registry, contained a massive 59.8 MB source map file named cli.js.map. This single file served as a high-fidelity translator, mapping minified production code back to roughly 512,000 lines of original, unobfuscated TypeScript.

For a company recently valued at $380 billion, with the Claude Code CLI alone generating an estimated $2.5 billion in annualized recurring revenue (ARR), the exposure was catastrophic. It was not the result of a sophisticated state-sponsored breach or a zero-day exploit. Instead, it was a “mundane and technical” failure: a breakdown in the fundamental engineering discipline required to ship enterprise-grade software. The leaked codebase, known internally as project Tengu, pulled back the curtain on the “agentic harness” that enables Claude to navigate file systems, execute terminal commands, and manage complex development workflows.

The irony is thick: the leaked source code contained a highly sophisticated Undercover Mode. This system was explicitly engineered to prevent internal Anthropic secrets, model codenames, and Slack references from leaking into public repositories. Anthropic had built a multi-layered secrecy subsystem to facilitate “ghost-contributing” AI-written code to the open-source community without attribution, only to have the entire infrastructure exposed by a missing line in an ignore file.

The Anatomy of a Packaging Failure

The leak was the “perfect storm” of a bundler bug meeting a configuration oversight. The failure is an instructive case study in how “vibe coding” (delegating development tasks to AI with minimal human review) can lead to disastrous operational outcomes.

The Bun Bug

In late 2024, Anthropic migrated Claude Code to Bun to take advantage of its performance as a bundler and runtime. However, Bun has a known bug (#28001) that causes the bundler to generate and serve source maps even when development: false is explicitly set in the production configuration. Although the issue was publicly reported and tagged, the Claude Code release process simply trusted the bundler to respect its settings.
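Because the release process trusted a bundler flag, a cheap defense-in-depth step is to inspect the build output itself. The sketch below is a hypothetical guard, not anything from the Claude Code build: it takes emitted files as path/content pairs (how you collect them is assumed and left out) and flags any *.map file, plus any JavaScript file that still carries a sourceMappingURL comment, the standard way a bundle points at its map.

```typescript
// Hypothetical post-build guard: inspect emitted artifacts instead of
// trusting the bundler's configuration flags.
interface BuildArtifact {
  path: string;    // path relative to the output directory
  content: string; // file contents as text
}

// Matches the `//# sourceMappingURL=...` (or legacy `//@`) comment that
// links a bundle to its source map; the URL is captured.
const SOURCE_MAPPING_URL = /\/\/[#@]\s*sourceMappingURL=(\S+)/;

function findSourceMapLeaks(artifacts: BuildArtifact[]): string[] {
  const problems: string[] = [];
  for (const { path, content } of artifacts) {
    if (path.endsWith(".map")) {
      problems.push(`${path}: source map file present in build output`);
      continue;
    }
    // Only JavaScript outputs (.js, .cjs, .mjs) are checked for the comment.
    if (/\.(c|m)?js$/.test(path)) {
      const match = SOURCE_MAPPING_URL.exec(content);
      if (match) {
        problems.push(`${path}: references source map ${match[1]}`);
      }
    }
  }
  return problems;
}

// Usage: a CI step would fail the release whenever the report is non-empty.
const report = findSourceMapLeaks([
  { path: "cli.js", content: "console.log(1);\n//# sourceMappingURL=cli.js.map" },
  { path: "cli.js.map", content: "{}" },
  { path: "README.md", content: "# docs" },
]);
```

Checking for the comment as well as the file matters because a bundle that still references its map can point readers at a copy hosted elsewhere.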

The Configuration Oversight

A source map links compiled, minified code back to the original source to aid debugging. Useful as that is during development, *.map files for closed-source software should never reach a public registry. The last line of defense is the .npmignore file, which lists the files excluded from the published NPM tarball. Anthropic engineers failed to include *.map in this exclusion list, so when Bun incorrectly generated the file, the NPM registry happily distributed it to the world.
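To see why a leaked map is so damaging, it helps to look at the Source Map Revision 3 format: a JSON object whose sources array lists original file paths and whose optional sourcesContent array can embed each file’s full text. The sketch below recovers embedded files from such a map. It illustrates the format generically and does not reconstruct the actual cli.js.map; the function name recoverSources is hypothetical.

```typescript
// Minimal subset of the Source Map Revision 3 JSON structure.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  sourceRoot?: string;
  mappings: string;
}

// Returns original source path -> embedded source text.
// Entries without embedded content (null or missing) are skipped; those
// would have to be fetched from the location named in `sources`.
function recoverSources(mapJson: string): Map<string, string> {
  const map = JSON.parse(mapJson) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`unsupported source map version: ${map.version}`);
  }
  const recovered = new Map<string, string>();
  const root = map.sourceRoot ?? ""; // naively prefixed to each source path
  map.sources.forEach((source, i) => {
    const text = map.sourcesContent?.[i];
    if (typeof text === "string") {
      recovered.set(root + source, text);
    }
  });
  return recovered;
}

// Usage with a tiny hand-written map: only the embedded file is recovered.
const example = JSON.stringify({
  version: 3,
  sources: ["src/main.tsx", "src/config.ts"],
  sourcesContent: ["export const main = () => {};", null],
  mappings: "",
});
const files = recoverSources(example);
```

When sourcesContent is populated, no access to the original repository is needed: the map alone carries the code.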

Technical Deep Dive: The R2 Connection

The exposure was deeper than the NPM package itself. The cli.js.map file did not just inline the code; it contained references pointing to a ZIP archive hosted on an Anthropic-owned Cloudflare R2 storage bucket. This provided a high-bandwidth path for the community to mirror nearly 2,000 files of TypeScript source. This mirroring occurred so rapidly across GitHub, Reddit, and X that DMCA takedown notices were effectively useless within hours of the discovery.

The Roadmap Hidden in the Comments

The leaked source code functioned as a crystal ball for Anthropic’s product strategy, revealing 44 feature flags and internal codenames that point to an aggressive push into autonomous agent development.

KAIROS and ULTRAPLAN

The most significant discovery was KAIROS (Ancient Greek for “the right time”). Mentioned over 150 times, KAIROS is a persistent, always-on background daemon. Unlike the current tool, which is reactive, KAIROS monitors the developer’s activity and acts proactively. It possesses exclusive tools like PushNotification and SubscribePR, and operates with a 15-second “blocking budget” per autonomous cycle.
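The article gives the 15-second blocking budget but not how it is enforced. One plausible reading, sketched below purely as an assumption, is a cycle that runs queued proactive actions in order, stops starting new ones once the budget is spent, and defers the rest to the next cycle. The clock is injected so the logic can be tested; runCycle, ProactiveTask, and the deferral behavior are hypothetical, not taken from the leaked code.

```typescript
// Hypothetical per-cycle time budget for a proactive background agent.
const BLOCKING_BUDGET_MS = 15000; // the 15-second budget described above

interface ProactiveTask {
  name: string;
  run: () => void; // synchronous for simplicity
}

interface CycleResult {
  completed: string[];
  deferred: ProactiveTask[]; // carried over to the next cycle
}

function runCycle(
  queue: ProactiveTask[],
  now: () => number = Date.now,
  budgetMs: number = BLOCKING_BUDGET_MS,
): CycleResult {
  const start = now();
  const completed: string[] = [];
  let i = 0;
  for (; i < queue.length; i++) {
    // The budget is checked before starting each task; a task that is
    // already running is not interrupted, so a cycle can overrun slightly.
    if (now() - start >= budgetMs) break;
    queue[i].run();
    completed.push(queue[i].name);
  }
  return { completed, deferred: queue.slice(i) };
}

// Usage with a fake clock: each task "takes" 6 seconds.
let fakeTime = 0;
const clock = () => fakeTime;
const task = (name: string): ProactiveTask => ({ name, run: () => { fakeTime += 6000; } });
const result = runCycle([task("a"), task("b"), task("c"), task("d")], clock);
```

With 6-second tasks, three start within the budget (the third finishes at 18 seconds) and the fourth is deferred, which shows why a real implementation might also need cancellation.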

Complementing this is ULTRAPLAN, a mode that offloads complex architectural reasoning to a remote Opus 4.6 session. This gives the agent up to 30 minutes of independent thought before “teleporting” the completed plan back to the local terminal, an approach aimed squarely at the “long-horizon” reasoning problem that plagues current AI.

The Model Hierarchy

The code confirms a robust internal roadmap with several upcoming models and their specific capabilities:

- Capybara: The internal codename for Claude 4.6 (linked to Claude Mythos), featuring a 1-million-token context window and variants like capybara-fast.

- Fennec: The internal codename for Opus 4.6.

- Numbat: A new model currently in the internal testing phase.

- Opus 4.7 and Sonnet 4.8: Explicit references in the Undercover Mode prompt confirm these versions are already in the internal development pipeline.

The Buddy System: A Vibe-Coding Artifact

Perhaps the most human reveal was Buddy, a Tamagotchi-style pet companion designed as an April Fools’ feature for 2026. Each user hatches an ASCII-art companion derived deterministically from a hash of their user ID, using the Mulberry32 pseudo-random number generator and the salt friend-2026-401.

- Species: Includes duck, axolotl, capybara, chonk, dragon, and ghost.

- Rarity: A gacha rarity system ranging from Common to Legendary (1% drop rate), with “shiny” variants and hats (crown, wizard, propeller).

- RPG Stats: Each buddy has five evolving stats: DEBUGGING, PATIENCE, CHAOS, WISDOM, and SNARK.
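Deterministic hatching means the same user always gets the same buddy without storing anything server-side. The sketch below shows the idea under stated assumptions: Mulberry32 and the salt come from the description above, but the seed hash (32-bit FNV-1a here), the order of the random rolls, and the names hatch and fnv1a32 are hypothetical. Only the 1% Legendary roll is modeled, since the other rarity weights are not given.

```typescript
// Hypothetical reconstruction of deterministic buddy hatching.
const SALT = "friend-2026-401";
const SPECIES = ["duck", "axolotl", "capybara", "chonk", "dragon", "ghost"] as const;
type Species = (typeof SPECIES)[number];

// 32-bit FNV-1a over UTF-16 code units (assumed seed hash; the real one may differ).
function fnv1a32(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0;
}

// Mulberry32: a small, fast 32-bit PRNG returning floats in [0, 1).
function mulberry32(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    state = (state + 0x6d2b79f5) >>> 0;
    let t = state;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

interface Buddy {
  species: Species;
  legendary: boolean; // only the 1% Legendary tier is modeled here
}

function hatch(userId: string): Buddy {
  const rng = mulberry32(fnv1a32(userId + SALT));
  const species = SPECIES[Math.floor(rng() * SPECIES.length)];
  const legendary = rng() < 0.01;
  return { species, legendary };
}

// Usage: hatching is a pure function of the user ID.
const buddy = hatch("user-123");
```

Salting the hash with a per-event string such as friend-2026-401 lets the same user IDs produce a fresh set of companions for a future event without changing the generator.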

Architectural Sophistication vs. “Unhinged” Reality

The analysis of the Tengu codebase revealed a professional modular design that was simultaneously plagued by the chaos of high-velocity development.

Architecture: The Three-Layer Memory

The leak provided a masterclass in AI memory management. Claude Code utilizes a sophisticated three-layer system:

The “Unhinged” Details

Despite the architectural brilliance, the community was quick to point out “unhinged” code quality issues. The file main.tsx weighs in at a staggering 803,924 bytes (about 0.8 MB) spread across 4,683 lines of code. Reviewers also found more than 460 eslint-disable comments, suggesting that safety and style rules were routinely bypassed.

One bizarre discovery was the hex-encoding of the word duck (64 75 63 6b). Engineers reportedly used this to bypass Anthropic’s own internal build scanners, which flagged the word as a collision with an internal model name. Furthermore, the codebase is littered with functions like writeFileSyncAndFlush_DEPRECATED() that remain in active production use, along with nine empty catch blocks in config.ts, a file critical for managing authentication.
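The hex trick works because a naive scanner matches literal substrings in source text, while the hex-escaped string is only assembled when the JavaScript engine decodes it. The sketch below illustrates this with a hypothetical scanner and a banned-word list containing duck; it does not reproduce Anthropic’s internal scanner, whose implementation is not described.

```typescript
// Hypothetical naive scanner: flags banned words that appear literally in source text.
function scanSource(source: string, banned: string[]): string[] {
  const lower = source.toLowerCase();
  return banned.filter((word) => lower.includes(word.toLowerCase()));
}

// Source text that spells the word literally is caught...
const literalSource = `const species = "duck";`;
// ...but the same value written as hex escapes is not: String.raw keeps the
// backslashes, exactly as they would appear in the file on disk.
const hexSource = String.raw`const species = "\x64\x75\x63\x6b";`;

// Decoding the bytes 64 75 63 6b yields the hidden word.
const decoded = String.fromCharCode(0x64, 0x75, 0x63, 0x6b);
```

A scanner that normalized escape sequences before matching would catch this, which is why purely textual scanners are easy to sidestep.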

“I added code that might be pointless… but it’s fine.”

— Ollie, Anthropic Engineer, in a comment found at mcp/client.ts line 589.

Defensive Mechanisms: Undercover Mode and Anti-Distillation

The leak laid bare the internal security features Anthropic uses to protect its intellectual property and maintain market dominance.

Undercover Mode and “Ghost-Contributing”

Undercover Mode was designed to strip AI attribution from public commits. When active, it instructs the model to operate “undercover,” forbidding any mention of internal codenames like Capybara or Fennec. The system prompt explicitly states: “You are operating UNDERCOVER… Your commit messages MUST NOT contain ANY Anthropic-internal information.” This allows Anthropic employees to “ghost-contribute” AI-written code to open-source projects without the Co-Authored-By lines that usually denote AI assistance.
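The quoted prompt relies on the model to keep internal names out of commit messages. A deterministic backstop, sketched here as an assumption rather than anything found in the leak, is a check (for example, one run from a commit-msg hook) that rejects messages mentioning internal codenames. The codename list reuses names from this article; findInternalReferences is a hypothetical name.

```typescript
// Hypothetical commit-message check for internal codenames.
const INTERNAL_CODENAMES = ["Capybara", "Fennec", "Numbat", "Tengu", "KAIROS"];

// Escapes regex metacharacters so each codename is matched literally.
function escapeRegExp(text: string): string {
  return text.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Returns the codenames found as whole words, case-insensitively.
function findInternalReferences(message: string, codenames: string[] = INTERNAL_CODENAMES): string[] {
  return codenames.filter((name) =>
    new RegExp(`\\b${escapeRegExp(name)}\\b`, "i").test(message),
  );
}

// Usage: a hook would abort the commit when the result is non-empty.
const hits = findInternalReferences("Fix flaky test in fennec loader");
```

Whole-word matching avoids flagging longer unrelated words, but a list that includes ordinary animal names will still produce some false positives, so a real hook would need an override path.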

Anti-Distillation and Sentinel Classifiers

To protect against competitors, Anthropic implemented Anti-distillation mode (ANTI_DISTILLATION_CC). This mode injects fake tool definitions and “canary traps” into system prompts to poison the training data of any competitor recording the API traffic.
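The article does not show how the decoy definitions are built. The sketch below illustrates the general canary technique under that caveat: derive a unique token, embed it in a plausible-looking fake tool definition, and later search suspect text (such as a competitor model’s outputs) for the token. All names, including the decoy tool name, are hypothetical.

```typescript
// Hypothetical canary-trap sketch: decoy tool definitions carrying unique tokens.
interface ToolDefinition {
  name: string;
  description: string;
}

// Derives a stable 8-hex-digit token from a secret and a session id.
// FNV-1a keeps the sketch short; a real system would use a keyed
// cryptographic MAC so tokens cannot be predicted or forged.
function canaryToken(secret: string, sessionId: string): string {
  const input = secret + ":" + sessionId;
  let h = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    h = Math.imul(h ^ input.charCodeAt(i), 0x01000193);
  }
  return ("00000000" + (h >>> 0).toString(16)).slice(-8);
}

function makeDecoyTool(token: string): ToolDefinition {
  return {
    name: `sync_workspace_${token}`,
    description: "Synchronizes workspace state with the remote index.",
  };
}

// Returns the tokens that appear in the suspect text.
function findCanaries(suspectText: string, tokens: string[]): string[] {
  return tokens.filter((token) => suspectText.includes(token));
}

// Usage: a decoy name resurfacing elsewhere reveals which session leaked it.
const token = canaryToken("server-secret", "session-42");
const decoy = makeDecoyTool(token);
const suspect = `The assistant then calls ${decoy.name} to refresh the index.`;
const found = findCanaries(suspect, [token]);
```

Because each session gets its own token, a match identifies not just that recorded traffic was reused but which session it came from.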

The leak also revealed the YOLO classifier (gated by TRANSCRIPT_CLASSIFIER), a fast ML-based system that auto-approves tool calls without user intervention. In addition, the codebase names specific individuals responsible for security boundaries: David Forsythe and Kyla Guru are listed as the owners of the Cyber Risk instructions, creating a “named individual” accountability mechanism.
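All the article says about the classifier is that it auto-approves tool calls. A common way to structure such a gate, shown here as an assumption, is to auto-approve only when the classifier’s confidence that a call is safe clears a threshold, and to ask the user otherwise. The classifier below is a toy stub; the 0.95 threshold and all names are hypothetical.

```typescript
// Hypothetical approval gate in front of tool execution.
interface ToolCall {
  tool: string;
  args: Record<string, string>;
}

type Decision = "auto-approve" | "ask-user";

// Stand-in for the ML classifier: estimated probability that the call is safe.
type SafetyClassifier = (call: ToolCall) => number;

function gate(call: ToolCall, classify: SafetyClassifier, threshold = 0.95): Decision {
  const pSafe = classify(call);
  // Anything the classifier is not confident about is escalated to the human.
  return pSafe >= threshold ? "auto-approve" : "ask-user";
}

// Toy classifier: read-only tools are considered safe, everything else is not.
const toyClassifier: SafetyClassifier = (call) =>
  ["read_file", "list_dir"].indexOf(call.tool) >= 0 ? 0.99 : 0.2;

const readDecision = gate({ tool: "read_file", args: { path: "README.md" } }, toyClassifier);
const shellDecision = gate({ tool: "bash", args: { command: "make deploy" } }, toyClassifier);
```

Failing toward "ask-user" keeps the cost of a classifier mistake to an extra prompt rather than an unreviewed action.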

The Aftermath: Weaponization and Supply Chain Risks

The secondary threat of the leak emerged within 24 hours. Threat actors rapidly weaponized the hype to target the very developers eager to explore the blueprints.

The Malware Campaign

Attackers set up fake GitHub repositories promising “leaked enterprise features” or “unlocked Claude Code.” These repositories were delivery vehicles for Vidar and GhostSocks malware, packaged in trojanized archives like ClaudeCode_x64.7z.

Technical Deep Dive: The TradeAI.exe Dropper

The campaign used a Rust-compiled dropper named TradeAI.exe, which relied on XOR encryption with a 12-byte rotating key (xnasff3wcedj) to hide its command-and-control (C&C) infrastructure. The dropper also ran a PowerShell evasion script that added Microsoft Defender exclusions for C:\Users and C:\ProgramData and disabled MAPS reporting, behavior monitoring, and cloud block-level protection.
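Repeating-key XOR is symmetric, so the routine that hides strings also recovers them. This is how analysts typically decode such configurations once the key is known. The sketch assumes the key is applied byte by byte from offset 0 of each encrypted blob, a detail the reports quoted here do not give, and uses a harmless placeholder instead of real C&C data.

```typescript
// Repeating ("rotating") key XOR: byte i is XORed with key[i % key.length].
// Applying the function twice with the same key returns the original bytes.
function xorWithRepeatingKey(data: Uint8Array, key: Uint8Array): Uint8Array {
  if (key.length === 0) throw new Error("key must not be empty");
  const out = new Uint8Array(data.length);
  for (let i = 0; i < data.length; i++) {
    out[i] = data[i] ^ key[i % key.length];
  }
  return out;
}

// ASCII helpers (sufficient for the plain-ASCII key and sample below).
const toBytes = (s: string) => Uint8Array.from(s, (c) => c.charCodeAt(0));
const fromBytes = (b: Uint8Array) => String.fromCharCode(...Array.from(b));

const KEY = toBytes("xnasff3wcedj"); // 12-byte key reported for TradeAI.exe

// Usage: "encrypt" a placeholder host, then decode it with the same key.
const plaintext = "c2.example.invalid";
const hidden = xorWithRepeatingKey(toBytes(plaintext), KEY);
const revealed = fromBytes(xorWithRepeatingKey(hidden, KEY));
```

A short repeating key offers obfuscation rather than real secrecy: once the key is extracted from one sample, every string encrypted with it can be read.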

Perhaps most sophisticated was the GPU hardware scoring system. The malware prioritized targets based on their hardware:

- NVIDIA GeForce RTX 30/40 Series: Highest priority for credential and wallet theft.

- Integrated Graphics: Assigned a -60 score (low priority).

- Virtual GPUs (VMware/VirtualBox): REJECTED. The malware terminates when it detects a virtual GPU, treating it as a sign of a sandbox or analysis environment.

Supply Chain Cascade

This incident was part of a broader “cascade” of attacks. Simultaneously, a compromise of axios delivered a remote access trojan (RAT), while attackers “squatted” Anthropic’s internal package names on NPM within hours. This mirrored the Shai-Hulud 2.0 attack of 2025, where thousands of repositories were compromised through similar trust-signal weaponization.

Strategic Lessons for the AI-Native Enterprise

The leak serves as the primary case study for the VibeGuard framework, which categorizes vulnerabilities inherent to AI-native development.

The VibeGuard Taxonomy

Engineering leaders must audit their processes against these five categories:

- V1: Source Code Exposure: Failure to exclude source maps from production artifacts.

- V2: Configuration Drift: Build rules that fail to sync with new file types.

- V3: Hardcoded Secrets: AI-generated placeholders replaced with live credentials.

- V4: Supply Chain Risks: Unpinned dependencies and compromised registry packages.

- V5: Artifact Hygiene: Accidental inclusion of .env files or internal logs.
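As one concrete instance of V4, the sketch below flags direct dependencies in a parsed package.json whose specifier is not an exact version: ranges such as ^1.7.0 or ~1.7.0, wildcards, tags like latest, and git or URL sources all fail the check. It is a simplified heuristic, not a full semver parser, and it ignores transitive dependencies, which a lockfile pins. The function name findUnpinned is hypothetical.

```typescript
// Simplified V4 check: list direct dependencies that are not pinned exactly.
interface PackageJson {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Exact versions such as 1.2.3 or 1.2.3-beta.1 (build metadata ignored for brevity).
const EXACT_VERSION = /^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$/;

function findUnpinned(pkg: PackageJson): string[] {
  const findings: string[] = [];
  const sections: [string, Record<string, string> | undefined][] = [
    ["dependencies", pkg.dependencies],
    ["devDependencies", pkg.devDependencies],
  ];
  for (const [section, deps] of sections) {
    if (!deps) continue;
    for (const name of Object.keys(deps)) {
      const spec = deps[name];
      if (!EXACT_VERSION.test(spec)) {
        findings.push(`${section}.${name}: "${spec}" is not an exact version`);
      }
    }
  }
  return findings;
}

// Usage: the caret range and the "latest" tag are both reported.
const findings = findUnpinned({
  dependencies: { axios: "^1.7.0", lodash: "4.17.21" },
  devDependencies: { typescript: "latest" },
});
```

Pinning does not prevent a compromised release from being published. It does stop a fresh install from silently picking up that release before anyone has reviewed it.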

Actionable Insights

To mitigate these risks, enterprises should shift their security perimeter to the infrastructure layer. Cloud Development Environments (CDEs) provide isolated workspaces that limit which corporate credentials a compromised tool can reach and exfiltrate. Organizations should also automate CI checks that use npm pack to audit the contents of a tarball before publication, ensuring *.map files never reach the registry.
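A minimal version of that CI check is sketched below under assumptions. It consumes the JSON printed by npm pack --dry-run --json (an array with one entry per package, each carrying a files list of objects with path and size fields) and reports any path that matches a deny-list. The patterns cover the V1 and V5 cases above and are illustrative; reading the command’s output into the function is left to the CI script, and auditPack is a hypothetical name.

```typescript
// Shape of one entry in `npm pack --dry-run --json` output (unused fields omitted).
interface PackEntry {
  name: string;
  version: string;
  files: { path: string; size: number }[];
}

// Deny-list for publishable artifacts (illustrative; extend per project).
const FORBIDDEN: { pattern: RegExp; reason: string }[] = [
  { pattern: /\.map$/, reason: "source map (V1)" },
  { pattern: /(^|\/)\.env(\.|$)/, reason: "environment file (V5)" },
  { pattern: /\.log$/, reason: "log file (V5)" },
];

function auditPack(npmPackJson: string): string[] {
  const entries = JSON.parse(npmPackJson) as PackEntry[];
  const violations: string[] = [];
  for (const entry of entries) {
    for (const file of entry.files) {
      for (const rule of FORBIDDEN) {
        if (rule.pattern.test(file.path)) {
          violations.push(`${entry.name}@${entry.version} ${file.path}: ${rule.reason}`);
        }
      }
    }
  }
  return violations;
}

// Usage with a hand-written sample resembling the leaked package layout.
const sample = JSON.stringify([
  {
    name: "@example/cli",
    version: "1.0.0",
    files: [
      { path: "package.json", size: 812 },
      { path: "cli.js", size: 10240 },
      { path: "cli.js.map", size: 59800000 },
    ],
  },
]);
const violations = auditPack(sample);
// violations: ["@example/cli@1.0.0 cli.js.map: source map (V1)"]
```

A complementary guard is the files field in package.json: unlike .npmignore, which excludes what it names, it publishes only what its patterns match, so narrow patterns keep unexpected file types out by default.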

“Vibe coding leads to configuration failures rather than logic bugs. The code works perfectly; it’s the packaging that fails because the AI was never told what to exclude.”

— Ying Xie, Researcher, VibeGuard Analysis.

Conclusion: The Engineering Discipline Gap

The Claude Code leak of 2026 is a stark reminder that AI companies are not immune to the laws of software engineering. Anthropic’s mistake was a failure of operational rigor. While its “agentic harness” showed remarkable maturity, and KAIROS revealed ambitious plans for future autonomy, its release process lacked the basic guardrails required for software at this scale.

As we transition to autonomous agents, the discipline gap will only grow. Developers are moving faster than ever, delegating configuration to AI that prioritizes “shipping” over “security.” The future of Agentic Governance depends on building control planes that can detect these failures before they reach public registries. Until then, the blueprints for the future remain only as secure as the last line of an .npmignore file.