Introduction: The Blueprint on the Passenger Seat

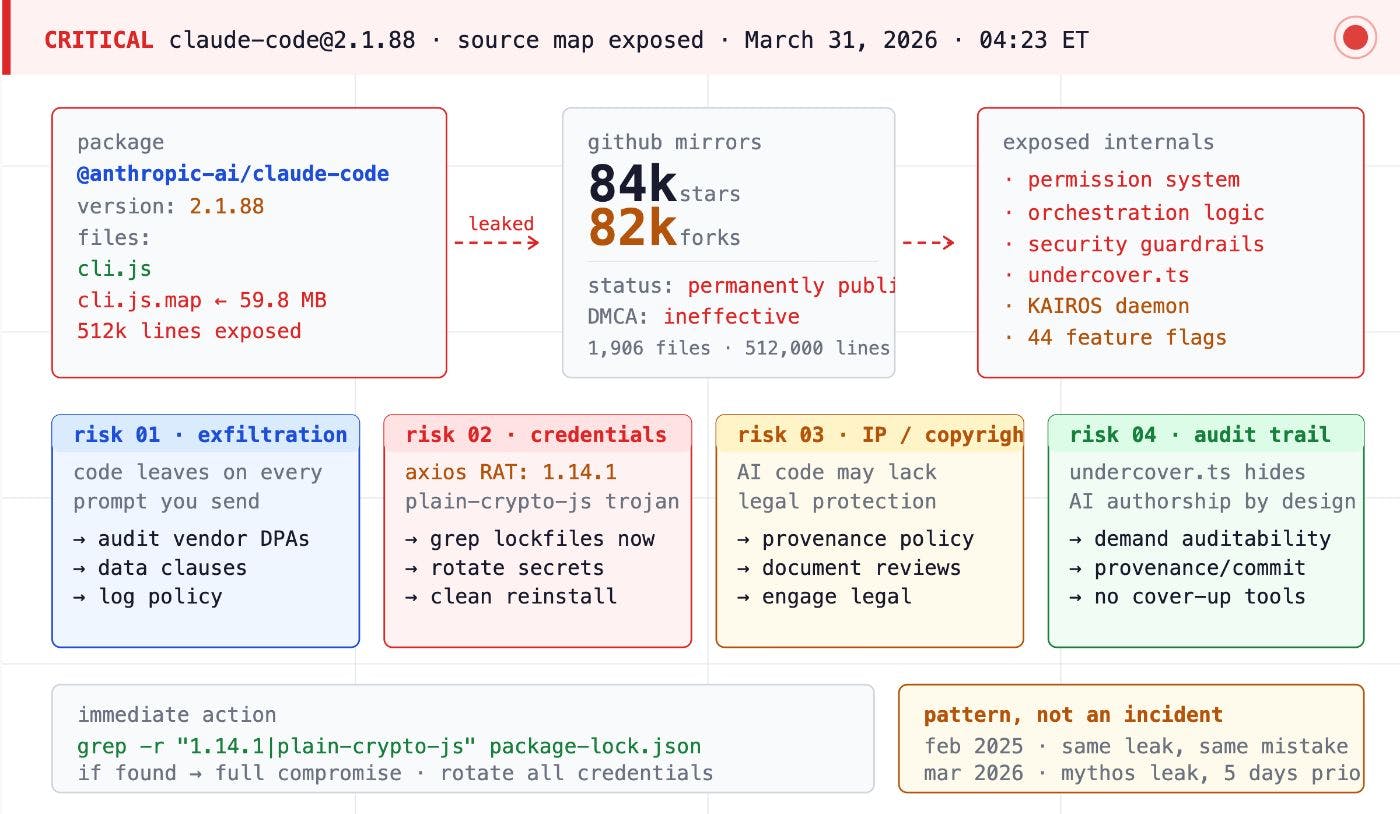

On March 31, 2026, the tech world witnessed a moment of staggering professional negligence. Security researcher Chaofan Shou discovered that the internal blueprints for Claude Code—Anthropic’s flagship agentic tool and a primary driver of its $2.5 billion ARR—were sitting in plain sight on the public npm registry. It was as if a master architect had left the detailed plans for a high-security vault sitting on the passenger seat of an unlocked car.

The leak, which occurred via version 2.1.88 of @anthropic-ai/claude-code, was more than just a code dump; it was a psychological profile of an organization moving at breakneck speed. The central irony is almost too perfect: buried within the 60MB of leaked files was a secrecy subsystem called Undercover Mode. While this module was designed to scrub internal codenames and prevent accidental disclosures, the very process of shipping it was the vehicle for its exposure. To a lead software architect, this repository looks like a high-stakes laboratory being run with the rigor of a 3:00 AM side project.

The Anatomy of a Packaging Error

The exposure was not the result of a sophisticated state-sponsored breach. It was a failure of basic engineering hygiene. The transition of Claude Code to the Bun runtime, which Anthropic acquired in late 2025, introduced a specific failure point in the build pipeline. This is the ultimate “own goal” for a company that now owns the tools it uses to build its products.

Technical Deep Dive: The Bun Bundler Bug

The technical root cause was Bun issue #28001. This bug causes the Bun bundler to generate and serve source maps even when the development option is explicitly set to false. While Anthropic engineers likely assumed their production flags would suppress these artifacts, the bundler appended a sourceMappingURL comment to the output regardless. As a result, the cli.js.map file was created and, because the .npmignore file had no *.map exclusion rule, published directly to the public registry.
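The two signals described above, a stray .map file in the package and a sourceMappingURL comment in the bundled output, are both cheap to check before publishing. The following is a minimal sketch of such a pre-publish check, not Anthropic's tooling. The function names are hypothetical, and the file list is assumed to come from something like the output of `npm pack --dry-run --json`.

```python
import fnmatch
import re

# Matches the trailing comment a bundler appends to point at a source map,
# e.g. "//# sourceMappingURL=cli.js.map" (the older "//@" form is also accepted).
SOURCE_MAP_COMMENT = re.compile(
    r"^//[#@]\s*sourceMappingURL=(\S+)\s*$", re.MULTILINE
)


def find_map_files(package_paths):
    """Return every path in the package file list that looks like a source map."""
    return [p for p in package_paths if fnmatch.fnmatchcase(p, "*.map")]


def find_map_reference(js_text):
    """Return the source map URL referenced by a bundle, or None if absent."""
    match = SOURCE_MAP_COMMENT.search(js_text)
    return match.group(1) if match else None


# Hypothetical package contents used only for illustration.
paths = ["package/package.json", "package/cli.js", "package/cli.js.map"]
bundle = "console.log('hi');\n//# sourceMappingURL=cli.js.map\n"

leaked_maps = find_map_files(paths)
referenced_map = find_map_reference(bundle)
```

A release script could refuse to publish whenever `leaked_maps` is non-empty or `referenced_map` is not None.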

The damage of a leaked source map cannot be overstated. Because the sourcesContent field within the cli.js.map file was populated, it didn’t just provide hints; it reconstructed the original, unminified, and unobfuscated TypeScript source code for nearly 2,000 files. Any developer with the file could effectively hit “undo” on the entire compilation process. The fact that the npm pack output—which takes roughly 10 seconds to verify—was never audited before publishing is a damning indictment of the company’s release hygiene.
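To see why a populated sourcesContent field amounts to an “undo” button, consider the sketch below. It relies only on the standard Source Map v3 layout, in which `sources` lists the original file paths and `sourcesContent` carries the matching file bodies at the same index. The example map and its file names are invented for illustration and are not taken from the leaked package.

```python
import json


def recover_sources(source_map_text):
    """Map each original path to its embedded source text, where present."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    # sourcesContent is index-aligned with sources; entries may be null.
    for path, content in zip(sources, contents):
        if content is not None:
            recovered[path] = content
    return recovered


# A tiny, made-up source map with one embedded file and one omitted file.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const answer: number = 42;\n", None],
    "mappings": "",
})

recovered = recover_sources(example_map)
```

Here `recovered` contains the full text of src/main.ts and nothing for src/util.ts, because only the first file had its content embedded. No decompilation or guesswork is involved; the original text is simply read back out of the JSON.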

Roadmaps and Codenames: What Was Under the Hood

For the investigative journalist, the leak provided a raw, unvarnished look at Anthropic’s internal model trajectory. The codebase was littered with 44 feature flags and model identifiers that pull back the curtain on the next two years of AI development. Among the most notable discoveries were:

- Tengu: The internal project codename for Claude Code itself. Telemetry events throughout the codebase are prefixed with tengu_, revealing the tool’s foundational identity.

- Capybara: Strongly linked in internal comments to Mythos, a next-frontier model currently being tested with a staggering 1-million-token context window.

- Fennec: The internal designation for Opus 4.6, confirming the model’s existence and active integration into agentic workflows.

- Numbat: A model identifier currently appearing in internal testing loops.

- Opus 4.7 and Sonnet 4.8: These specific version strings were found within the Undercover Mode prompts, indicating that Anthropic is already planning for iterations well beyond current public releases.

The codebase also used “gemstone” codenames for feature-level flags, such as tengu_cobalt_frost for voice synthesis and tengu_amber_quartz as an emergency safety override for voice modalities. These reveal an architecture that is already capable of much more than the public is currently permitted to see.

Feature Reveal: From KAIROS to ASCII Pets

The leak confirms that Anthropic is sitting on a reservoir of “agentic” capabilities that transform the AI from a chatbot into a persistent, autonomous worker.

KAIROS and ULTRAPLAN

The most significant revelation was KAIROS, an “always-on” daemon. Unlike the current interactive mode, KAIROS operates in the background, monitoring a developer’s environment and taking proactive actions—such as sending push notifications or subscribing to Pull Request events—without direct human invocation. This is the shift from “AI tool” to “AI colleague.”

Working alongside this is ULTRAPLAN, a feature that offloads complex architectural tasks to a remote Opus 4.6 session. This allows the system to engage in up to 30 minutes of “remote reasoning” before returning a consolidated plan to the local terminal, bypassing the token limits and latency of standard local execution.

The Multi-Agent Coordinator and Buddy

The architecture also supports Multi-Agent Coordinator Mode, which allows Claude Code to spawn parallel subagents, assign them unique scratchpads, and synthesize their results. This suggests that the future of the product is not a single model, but a swarm of specialized workers.
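The spawn, scratchpad, and synthesize pattern can be illustrated with a short sketch. This is an assumption-laden toy, not the leaked implementation: `Scratchpad`, `run_subagent`, and `coordinate` are hypothetical names, and the “subagent” here just records a note instead of calling a model.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Scratchpad:
    """Private working notes belonging to a single subagent."""
    owner: str
    notes: list = field(default_factory=list)


def run_subagent(name, task):
    """Stand-in for a subagent: works on its own scratchpad and returns it."""
    pad = Scratchpad(owner=name)
    pad.notes.append(f"{name} finished: {task}")
    return pad


def coordinate(tasks):
    """Run one subagent per task in parallel, then merge their notes."""
    with ThreadPoolExecutor(max_workers=len(tasks) or 1) as pool:
        # pool.map returns results in the same order as the inputs.
        pads = list(pool.map(lambda item: run_subagent(*item), tasks.items()))
    return "\n".join(note for pad in pads for note in pad.notes)


summary = coordinate({"agent-a": "audit build config", "agent-b": "scan for .map files"})
```

Giving each worker its own scratchpad keeps intermediate state isolated, so the coordinator only has to merge finished results.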

Yet, amidst this high-tech ambition, we find the Buddy system—a Tamagotchi-style pet companion. The system features 18 species (including “chonks” and dragons) with gacha-style rarity and a 1% “shiny” variant. The internal commentary on the pet system is remarkably casual compared to the security protocols:

“Mulberry32 — tiny seeded PRNG, good enough for picking ducks.”

This reveals a company culture where engineers were spending time perfecting a digital duck’s SNARK and CHAOS stats while neglecting the build configuration that would eventually leak those very ducks to the world. It was even discovered that engineers hex-encoded the word “duck” (64 75 63 6b) to bypass their own internal build scanners that flagged the term as a possible collision with a model codename.
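For readers unfamiliar with it, Mulberry32 is a widely circulated 32-bit seeded generator, usually written in JavaScript. The sketch below is a Python port of that public routine, with explicit masking to emulate 32-bit integer arithmetic. The species list, the `roll_buddy` helper, and the way the 1% shiny roll is drawn are assumptions for illustration, not the leaked code. The last line decodes the hex string quoted above.

```python
MASK32 = 0xFFFFFFFF


def mulberry32(seed):
    """Return a function producing floats in [0, 1) from a 32-bit seed."""
    state = seed & MASK32

    def next_float():
        nonlocal state
        state = (state + 0x6D2B79F5) & MASK32
        t = state
        # Each multiply is truncated to 32 bits, like Math.imul in JavaScript.
        t = ((t ^ (t >> 15)) * (t | 1)) & MASK32
        t ^= (t + ((t ^ (t >> 7)) * (t | 61))) & MASK32
        return (t ^ (t >> 14)) / 4294967296

    return next_float


# Hypothetical subset of species; the article mentions 18 in total.
SPECIES = ["duck", "chonk", "dragon"]


def roll_buddy(seed):
    """Pick a species and a 1% shiny flag deterministically from a seed."""
    rng = mulberry32(seed)
    species = SPECIES[int(rng() * len(SPECIES))]
    shiny = rng() < 0.01
    return species, shiny


# The hex bytes quoted in the text decode to the flagged word.
DECODED = bytes.fromhex("64 75 63 6b").decode("ascii")
```

Because the generator is seeded, the same seed always yields the same pet, which is typically the point of using a small deterministic PRNG for cosmetic features.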

Architecture and “Paranoid” Engineering

From a systems architect’s perspective, the code reveals a “paranoid” engineering culture obsessed with protecting intellectual property, even if it failed at the front door. The YOLO classifier, a fast ML-based permission system, stands out as a mechanism for deciding when to auto-approve tool calls without interrupting the user. It is gated behind TRANSCRIPT_CLASSIFIER and represents a massive step toward full autonomy.

Technical Deep Dive: The Memory Architecture

Claude Code uses a three-layer memory system. A permanent MEMORY.md file stores lightweight pointers to facts, while the facts themselves live in topic-specific files. A background agent called Dream periodically consolidates these files through four phases: Orient, Gather, Consolidate, and Prune. This ensures the system remains “thin” enough for the context window. As the comments suggest, the system is designed to be “thinking about you” while you sleep, consolidating the previous day’s work into permanent knowledge.
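The pointer-index-plus-topic-files idea and the four Dream phases can be sketched in a few lines. Everything below is an illustrative assumption: the `MemoryStore` class, the `dream` function, the in-memory dictionaries standing in for MEMORY.md and the topic files, and the pruning rule (keep the most recent facts) are all hypothetical. Only the phase names come from the text above.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    # Stand-in for MEMORY.md: topic name -> name of the topic file.
    index: dict = field(default_factory=dict)
    # Stand-in for the topic files: file name -> list of facts.
    topics: dict = field(default_factory=dict)


def dream(store, new_notes, max_facts_per_topic=3):
    """Fold (topic, fact) notes into the store in four phases."""
    # Orient: read the index to learn which topics already exist.
    known_topics = set(store.index)

    # Gather: group the incoming notes by topic.
    gathered = {}
    for topic, fact in new_notes:
        gathered.setdefault(topic, []).append(fact)

    # Consolidate: merge facts into topic files, skipping duplicates,
    # and add an index pointer for any topic seen for the first time.
    for topic, facts in gathered.items():
        if topic not in known_topics:
            store.index[topic] = f"{topic}.md"
        existing = store.topics.setdefault(store.index[topic], [])
        for fact in facts:
            if fact not in existing:
                existing.append(fact)

    # Prune: drop the oldest facts so each topic file stays small.
    for facts in store.topics.values():
        del facts[:max(0, len(facts) - max_facts_per_topic)]
    return store


store = dream(MemoryStore(), [
    ("build", "uses bun"), ("build", "uses bun"),
    ("build", "a"), ("build", "b"), ("build", "c"),
])
```

The index stays tiny because it only holds pointers, while pruning bounds the size of each topic file; that is one plausible way to keep memory “thin” enough for a context window.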

Other security measures found in the leak include an anti-distillation mode, which poisons API traffic with fake tool definitions to hinder competitors trying to train on Claude’s outputs. There is also a client attestation system that uses cryptographic hashes from the Bun runtime to verify requests. Most interestingly, the engineers implemented frustration detection using regex patterns like wtf|ffs|shit to track user swearing as a telemetry metric, effectively measuring the emotional toll of their AI’s mistakes.
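A regex-based frustration signal of this kind is simple to reproduce. The sketch below uses the three alternatives quoted above; the word boundaries, the case-insensitive flag, and the function names are assumptions added for illustration, since the exact pattern and how it feeds telemetry are not given here.

```python
import re

# The three terms come from the quoted pattern; \b and IGNORECASE are assumptions.
FRUSTRATION = re.compile(r"\b(?:wtf|ffs|shit)\b", re.IGNORECASE)


def is_frustrated(message):
    """Return True if the message contains any of the flagged terms."""
    return FRUSTRATION.search(message) is not None


def frustration_rate(messages):
    """Fraction of messages that trip the pattern (0.0 for an empty list)."""
    if not messages:
        return 0.0
    return sum(is_frustrated(m) for m in messages) / len(messages)


rate = frustration_rate(["WTF is this diff", "ship it", "looks good", "ffs, again?"])
```

The word boundaries matter: without them, a harmless word that merely contains one of the terms as a substring would be counted as swearing.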

The “Unhinged” Codebase: Relatable Tech Debt

The 512,000 lines of code didn’t look like the pristine output of an AI safety lab. Instead, they revealed a codebase with “unhinged energy.” The main.tsx file is a monolithic 800KB nightmare of 5,000 lines. The repository contains over 460 eslint-disable comments, showing that when the “vibes” were good, the safety checks were the first thing to be discarded.

Perhaps most startling was the documentation of Undercover Mode as a tool for ghost-contributing. The system prompt explicitly tells the model: “You are operating UNDERCOVER… Your commit messages MUST NOT contain ANY Anthropic-internal information.” This infrastructure allows Anthropic to ship AI-written code into public repositories without attribution, raising profound questions about open-source transparency. Furthermore, the code is littered with 50+ functions like writeFileSyncAndFlush_DEPRECATED() that remain in production use. An engineer named Ollie even left comments admitting they weren’t sure if certain code blocks were necessary, a moment of profound relatability that humanizes a $380 billion giant but underscores the risks of “vibe coding” at scale.

The Second Wave: Weaponization and Supply Chain Risk

The vacuum created by the leak was immediately filled by threat actors. Within 24 hours, the incident was weaponized to distribute Vidar stealer and GhostSocks proxy malware. Attackers utilized the TradeAI.exe dropper, masquerading as “leaked” versions of Claude Code or “unlocked” enterprise tools.

These actors were sophisticated, utilizing a GPU hardware scoring system to target victims. High-end NVIDIA gaming PCs (RTX 30/40 series) were prioritized for infection, presumably for cryptocurrency mining or high-value credential theft, while virtual machines were rejected. This malware appeared under lures like WormGPT, NemoClaw, and KawaiiGPT. This was not an isolated incident but part of a 2026 supply chain crisis that saw concurrent compromises of axios and LiteLLM, proving that the modern developer’s terminal is the new front line of enterprise security.

Strategic Lessons: Moving the Perimeter

The Claude Code incident is the definitive case study for the VibeGuard taxonomy. This framework identifies the blind spots inherent in AI-assisted development, where AI-generated code produces 1.7x more issues than human-written code according to recent arXiv findings. The taxonomy highlights five failures: Artifact hygiene, Config drift, Hardcoded secrets, Supply chain risks, and Source-map exposure.

“This was a release packaging issue caused by human error, not a security breach.”

While Anthropic’s official statement attempts to downplay the incident, the reality is that the security perimeter must shift. Local “vibe coding” creates a black box on developer laptops that bypasses corporate firewalls. The only sustainable path forward for the enterprise is the adoption of Isolated Cloud Development Environments (CDEs). By moving the workspace to the infrastructure layer, a missing .npmignore becomes a configuration error in a sandbox rather than a multi-billion-dollar exposure of intellectual property.

Conclusion: The Engineering Discipline Gap

The ultimate lesson of the Claude Code leak is that even the world’s most advanced AI labs are susceptible to the most basic engineering failures. We are witnessing a widening gap between the velocity of AI-driven feature development and the stagnation of operational hygiene. “Vibe coding” allows us to build incredible things at 3:00 AM, but without a rigorous, automated perimeter, it also allows us to leak those things by 4:00 AM.

As AI agents move from writing snippets to managing entire build pipelines, the human-in-the-loop requirement for packaging and release configuration has never been more vital. Anthropic’s ducks may be “shiny” and “legendary,” but the leak of their entire agentic harness is a sobering reminder that in the age of autonomous code, the most dangerous vulnerability is still a tired human with a misconfigured ignore file.