Introduction: The Irony of “Undercover Mode”

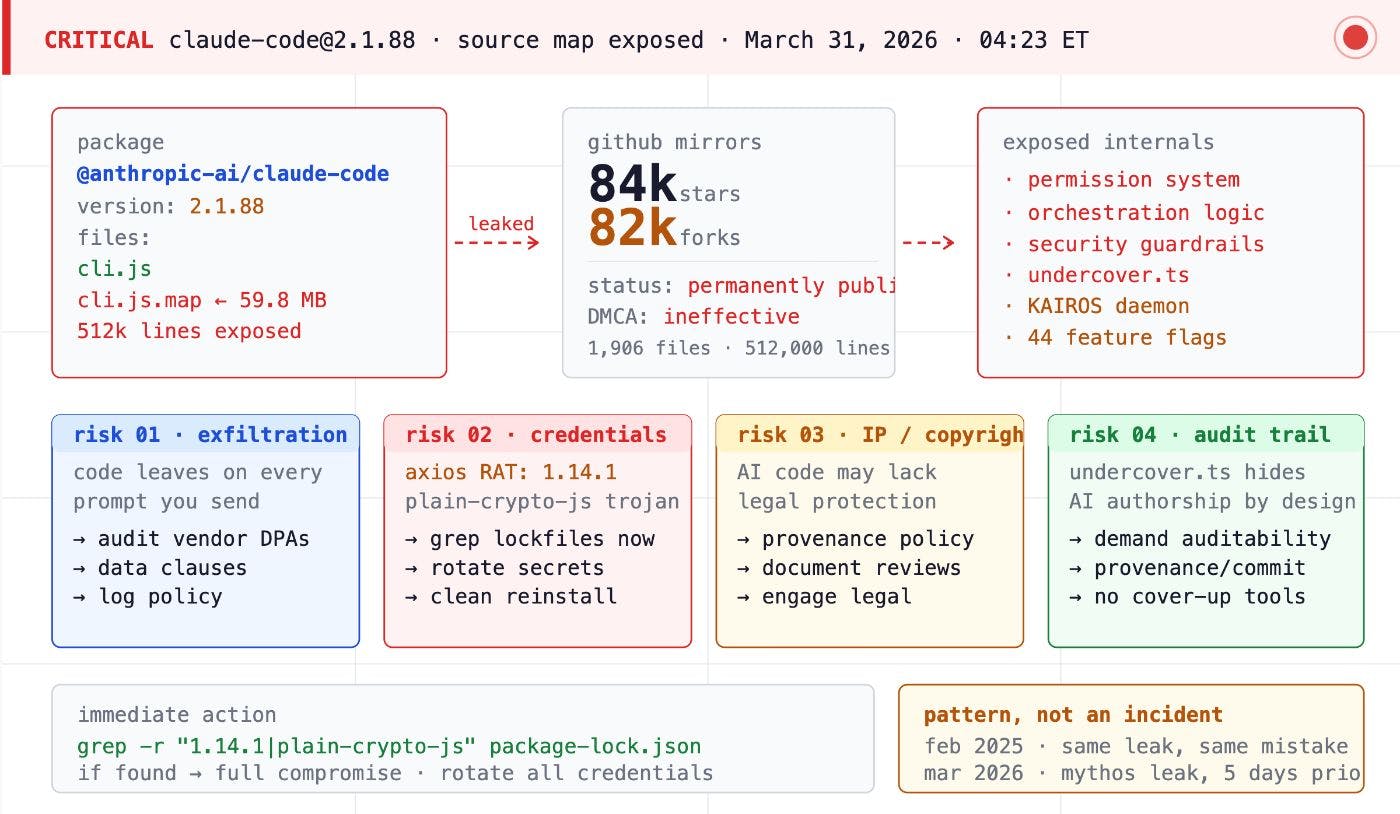

On March 31, 2026, the artificial intelligence industry was treated to a masterclass in operational irony. Anthropic, the multibillion-dollar AI lab that markets its tools as the ultimate guardians of engineering discipline, inadvertently pushed its most valuable proprietary code to the public npm registry. Security researcher Chaofan Shou discovered that version 2.1.88 of @anthropic-ai/claude-code was not merely a minified bundle; it was a transparent window into the company’s internal roadmap, technical debt, and secret “paranoid” engineering layers.

The most stinging irony found within the 512,000 lines of TypeScript was a subsystem called Undercover Mode. Defined in undercover.ts, this module was specifically designed to prevent the AI from accidentally leaking Anthropic secrets—such as internal model codenames like Capybara (the 1M context variant) or Fennec (Opus 4.6)—during public contributions. Anthropic had built a sophisticated safeguard to ensure their AI wouldn’t spill secrets, yet they effectively handed the keys to their kingdom to the public through a mundane packaging error.

This event serves as a watershed moment for the “vibe coding” era. It illustrates that while AI can help us build at lightning speed, it cannot yet replace the foundational discipline of software release hygiene. The company that sells the world’s most popular AI coding tool apparently skips the engineering discipline it preaches, proving that even in the age of Mythos and high-horizon reasoning, a single missing line in a configuration file remains a terminal vulnerability.

The Anatomy of a Packaging Error: Bun, Source Maps, and Missing Safety Nets

The leak was not the result of a sophisticated zero-day or a breach of Anthropic’s internal servers. It was a failure of artifact hygiene so basic it could have been prevented with a ten-second manual review of the npm tarball.

The Bun Runtime Bug (Issue #28001)

In late 2024, Anthropic migrated Claude Code to the Bun runtime to prioritize execution speed. In doing so, it inherited a known bug in the Bun bundler: since early 2026, Issue #28001 has tracked a defect in which setting development: false fails to suppress source map generation in production builds. Although the Bun team was aware of the problem and the issue had been visible on the public tracker for weeks, Anthropic’s release pipeline blindly trusted the bundler to strip sensitive debugging data.

The Missing .npmignore

The second failure point was a configuration oversight. By default, npm publish packs every file in the package directory unless it is excluded by .npmignore (or by .gitignore when no .npmignore exists) or left out of a files allowlist in package.json. Anthropic failed to add *.map to its .npmignore file. Consequently, the 59.8 MB cli.js.map file was bundled into the public package. To make matters worse, this map file didn’t just contain references; it pointed toward a secondary ZIP archive hosted on an Anthropic-owned Cloudflare R2 storage bucket, providing a fallback path for mirrors to download the full source code even after the original npm package was pulled.
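A pre-publish check along the following lines would flag a stray map file before it ships. This is a minimal sketch, not Anthropic’s pipeline: it assumes the JSON report printed by npm pack --dry-run --json (an array of package entries, each with a files list of path and size objects), and the function name find_source_maps and the sample report are illustrative.

```python
import json


def find_source_maps(pack_report_json: str) -> list[str]:
    """Return paths of .map files listed in an `npm pack --dry-run --json` report."""
    report = json.loads(pack_report_json)
    offenders = []
    for package in report:
        for entry in package.get("files", []):
            if entry["path"].endswith(".map"):
                offenders.append(entry["path"])
    return offenders


# Hypothetical report, trimmed to the fields the check reads.
sample_report = json.dumps([
    {
        "name": "example-cli",
        "files": [
            {"path": "cli.js", "size": 1024},
            {"path": "cli.js.map", "size": 4096},
            {"path": "package.json", "size": 300},
        ],
    }
])

leaked = find_source_maps(sample_report)
if leaked:
    print("Refusing to publish; source maps in tarball:", leaked)
```

In a CI job, the report would come from the real npm command and a non-empty result would fail the build.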

Technical Deep Dive: How Source Maps Exposed the Source

Source maps are designed to link minified JavaScript back to human-readable TypeScript for debugging. The cli.js.map file utilized the sourcesContent field. When this field is populated, the .map file literally contains the original, unminified source code as a string. By parsing this file, researchers were able to reconstruct the entire 1,900-file directory structure, exposing every internal constant, developer comment, and prompt engineering instruction used to guide Claude’s agentic behavior.
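To make the mechanism concrete, the sketch below pairs each entry in a version 3 source map’s sources array with the matching entry in sourcesContent. The sample map and its file paths are made up for illustration; only the field names come from the source map format itself.

```python
import json


def extract_sources(source_map_text: str) -> dict[str, str]:
    """Map each original file path to its embedded source text."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(sources, contents):
        if content is not None:  # entries may be null when content is omitted
            recovered[path] = content
    return recovered


# Hypothetical two-file map; the second file has no embedded content.
sample_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.tsx", "../src/example.ts"],
    "sourcesContent": ["export const main = () => {};\n", None],
    "mappings": "",
})

for path, text in extract_sources(sample_map).items():
    print(path, len(text), "characters recovered")
```

Writing each recovered string to its path is all it takes to rebuild the directory tree, which is why a populated sourcesContent field is equivalent to shipping the source.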

Inside the “Unhinged” Codebase: Engineering at the Speed of AI

The leaked source code provided a rare, unvarnished look at how a “vibe-coded” product matures. Claude Code’s development lead has previously admitted that 100% of his contributions were written by Claude itself. The result is a codebase that community analysts have described as “absolutely unhinged”—a sprawling, 800KB-per-file monument to shipping fast and worrying about modularity later.

Massive Files and Technical Debt

Traditional software engineering encourages modularity, but Claude Code favors density. The main.tsx file is a massive 803,924 bytes, spanning 4,683 lines. Other core utilities, such as the printing handler and message orchestrator, exceed 5,500 lines each. The pressure to ship is evident in the technical debt: the code contains over 460 eslint-disable comments, suggesting that the AI (or its human supervisor) frequently suppressed lint rules to force features into production.

Perhaps the most telling marker of this “vibe” is the naming convention for critical functions. The system responsible for saving sensitive authentication credentials to disk is named writeFileSyncAndFlush_DEPRECATED(). Despite the name, it remains actively called in over 50 places throughout the production stack. As one analyst noted, “deprecated is just a vibe at Anthropic apparently.”

The “Buddy” System and Hex-Encoded Ducks

In a surprising departure from the clinical tone of enterprise software, the leak revealed a fully functional Tamagotchi-style pet system called Buddy. Users can hatch one of 18 species (including axolotl, dragon, ghost, and chonk) based on a deterministic hash of their user ID. The system includes:

- Gacha Rarity: A 1% drop rate for “legendary” pets and “shiny” variants.

- RPG Stats: Metrics for DEBUGGING, PATIENCE, CHAOS, WISDOM, and SNARK.

- Cosmetics: Functional “hats” like wizard hats, crowns, and propeller beanies.
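A deterministic hatch of this kind can be sketched as follows. Mulberry32 is the PRNG named in the leaked comments, but everything else here is an assumption: the FNV-1a seed hash, the hatch function, the way the 1% roll is applied, and the species list, which includes only the four species named above rather than all 18.

```python
MASK32 = 0xFFFFFFFF
SPECIES = ["axolotl", "dragon", "ghost", "chonk"]  # subset, for illustration


def fnv1a_32(text: str) -> int:
    """32-bit FNV-1a hash (an assumed choice for turning a user ID into a seed)."""
    h = 0x811C9DC5
    for byte in text.encode("utf-8"):
        h ^= byte
        h = (h * 0x01000193) & MASK32
    return h


def mulberry32(seed: int):
    """Return a generator function producing floats in [0, 1) via Mulberry32."""
    state = seed & MASK32

    def next_float() -> float:
        nonlocal state
        state = (state + 0x6D2B79F5) & MASK32
        t = state
        t = ((t ^ (t >> 15)) * (t | 1)) & MASK32
        t ^= (t + (((t ^ (t >> 7)) * (t | 61)) & MASK32)) & MASK32
        return ((t ^ (t >> 14)) & MASK32) / 4294967296

    return next_float


def hatch(user_id: str) -> dict:
    """Pick a species and roll for legendary status from the user ID alone."""
    rng = mulberry32(fnv1a_32(user_id))
    species = SPECIES[int(rng() * len(SPECIES))]
    legendary = rng() < 0.01  # assumed reading of the 1% drop rate
    return {"species": species, "legendary": legendary}


print(hatch("user-123") == hatch("user-123"))  # same ID, same pet
```

Because the seed depends only on the user ID, the same user always hatches the same pet, with no state stored anywhere.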

In a bizarre act of internal tool-dodging, the developers hex-encoded the word duck (appearing as 0x6475636b) to bypass their own internal security scanners, which had flagged the word as a collision with an internal model codename.
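The encoding is easy to verify by hand: each pair of hex digits is one ASCII byte. The helper name below is illustrative.

```python
def decode_hex_word(literal: str) -> str:
    """Decode a hex literal such as 0x6475636b into its ASCII text."""
    return bytes.fromhex(literal[2:]).decode("ascii")


decoded = decode_hex_word("0x6475636b")
print(decoded)  # duck
```

A scanner that matches on the plain string never sees the word, which is the whole trick.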

Developer Confessions in the Code

The internal comments provide a humorous, human glimpse into the chaotic development of high-stakes AI:

“TODO: figure out why” (Found inside the primary error handler).

“Mulberry32 — tiny seeded PRNG, good enough for picking ducks.”

“This fails an e2e test if the ?. is not present. This is likely a bug in the e2e test.”

“Not sure how this became a string… TODO: Fix upstream.”

An engineer named Ollie even left a comment in mcp/client.ts (line 589) admitting that certain logic might be entirely pointless, yet it remains in the production build.

Roadmap Spoilers: KAIROS, ULTRAPLAN, and the Three-Layer Memory

Beyond the humor, the leak provided a clear blueprint for Anthropic’s agentic future. The 44 feature flags found in the source reveal a move toward much higher levels of autonomy and persistence.

KAIROS and ULTRAPLAN

KAIROS is the most significant unreleased feature. Described as an “always-on” daemon mode, it operates as a background process that monitors the developer’s terminal context and intervenes proactively. It operates on a strict 15-second “blocking budget” and has access to exclusive tools like PushNotification and SubscribePR.

ULTRAPLAN represents a shift toward long-horizon reasoning. It allows Claude Code to offload complex tasks to a remote Opus 4.6 session that is granted a 30-minute “thinking time” budget to formulate a comprehensive plan before executing locally.

The Memory Architecture

The leak also detailed a sophisticated three-layer memory design:

1. The Index: A lightweight MEMORY.md file (~150 chars per line) that lives permanently in the model’s context, storing pointers to facts.

2. Topic Files: Detailed knowledge bases fetched on demand when the index is triggered.

3. The Dream System: A background autoDream subagent that consolidates memory in four phases: Orient, Gather, Consolidate, and Prune. This system is gated by three conditions: 24 hours since the last “dream,” at least 5 user sessions, and a consolidation lock.
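The three gating conditions can be sketched as a single predicate. This is an assumption-laden illustration, not the leaked implementation: the DreamState type, its field names, and the reading of the lock condition as “no other consolidation run currently holds the lock” are all hypothetical, as is counting sessions since the last dream.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

MIN_INTERVAL = timedelta(hours=24)
MIN_SESSIONS = 5


@dataclass
class DreamState:
    last_dream_at: datetime
    sessions_since_last_dream: int
    lock_held: bool  # True while another consolidation run holds the lock


def should_dream(state: DreamState, now: datetime) -> bool:
    """Return True only when all three gates are open."""
    return (
        now - state.last_dream_at >= MIN_INTERVAL
        and state.sessions_since_last_dream >= MIN_SESSIONS
        and not state.lock_held
    )


now = datetime(2026, 4, 2, 12, 0)
ready = DreamState(now - timedelta(hours=25), 6, False)
print(should_dream(ready, now))  # True
```

Requiring all three conditions keeps consolidation infrequent and prevents two runs from rewriting memory at the same time.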

The Paranoid Layer: Security and Anti-Distillation

The source code reveals that Anthropic is locked in a cold war with competitors and API abusers. It has implemented several “paranoid” engineering layers, which the code attributes to named individuals: David Forsythe and Kyla Guru from the Safeguards team.

Anti-Distillation and Poisoning

The ANTI_DISTILLATION_CC flag triggers a proactive defense against competitors trying to train their own models using Claude’s outputs. The system can inject fake tool definitions and “poison” traffic being recorded for distillation purposes, effectively serving as a logical canary trap.

Client Attestation and Frustration Tracking

To prevent API abuse, the Bun runtime utilizes Client Attestation. Every request includes a billing header where the native runtime replaces a cch=00000 placeholder with a cryptographic hash. This ensures the request originates from a legitimate, unmodified Claude Code binary.
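The placeholder substitution can be pictured with the sketch below. Only the cch=00000 placeholder comes from the leaked code; the hash algorithm, its input, and the key are not described in this article, so HMAC-SHA256 over the request body, truncated to five hex digits, stands in purely for illustration, and the header layout is made up.

```python
import hashlib
import hmac

PLACEHOLDER = "cch=00000"


def attest(header_value: str, body: bytes, secret: bytes) -> str:
    """Replace the placeholder with a short keyed digest of the request body."""
    digest = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return header_value.replace(PLACEHOLDER, "cch=" + digest[:5], 1)


# Hypothetical header, body, and key.
header = "client=example; cch=00000; v=1"
signed = attest(header, b'{"prompt": "hello"}', b"demo-secret")
print(signed)
```

A server holding the same key can recompute the digest and reject any request whose header still carries the placeholder or a mismatched value.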

The system even tracks user sentiment through a Frustration Detection layer. Using regex patterns like wtf|ffs|shit, the tool monitors user swearing to measure model performance. It also tracks how often a user types continue to detect when the model is prematurely cutting off responses.
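A rough version of both signals fits in a few lines. The three-word pattern is the one quoted above; the word boundaries, case-insensitive matching, and the score_messages helper are additions made for this sketch.

```python
import re

# Pattern from the article, wrapped in word boundaries (an added assumption).
FRUSTRATION = re.compile(r"\b(?:wtf|ffs|shit)\b", re.IGNORECASE)


def score_messages(messages: list[str]) -> dict[str, int]:
    """Count frustrated messages and bare 'continue' prompts."""
    frustrated = sum(1 for m in messages if FRUSTRATION.search(m))
    continues = sum(1 for m in messages if m.strip().lower() == "continue")
    return {"frustrated": frustrated, "continues": continues}


sample = ["wtf is this", "continue", "looks good", "Continue ", "ffs, again?"]
print(score_messages(sample))  # {'frustrated': 2, 'continues': 2}
```

Neither count needs a model call, which makes them cheap proxies for response quality at scale.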

“Vibe Coding” and Local Customization: The “Riley Code” Experiment

The leak fueled the “vibe coding” movement, where developers prioritize intent over manual code review. Creator Riley Brown demonstrated this by using OpenAI Codex to fork and modify the leaked TypeScript in hours.

In an experiment dubbed Riley Code, Brown stripped Anthropic’s branding and replaced standard status verbs with popular internet slang. His version displayed terms like Status maxing, Mewing, and Aura farming while it worked. He even instructed the AI to adopt the persona of a “19-year-old smart intern” who responds only in lowercase.

This experiment highlights the inherent risks of the era; to achieve this speed, Brown set the tool to dangerously skip permissions by default. While entertaining for a YouTube demo, such a configuration would be a catastrophic liability in a corporate environment where an AI agent could delete an entire production database without a second thought.

Weaponized Attention: The Rise of Fake Leaks and Malware

Within 24 hours, the leak was weaponized by threat actors to distribute Vidar and GhostSocks malware. Analysis from Trend Micro revealed a rapid pivot from existing AI-themed campaigns to exploit the “Claude Code” brand.

The TradeAI.exe Dropper

Attackers used GitHub to host fraudulent repositories promising “unlocked” or “Enterprise” versions of the leaked code. The primary lure was a 7z archive (e.g., ClaudeCode_x64.7z) containing a Rust-compiled dropper known as TradeAI.exe. This binary used XOR-encrypted strings with a 12-byte rotating key (xnasff3wcedj) stored in the cryptify_keyd3d environment variable to hide its C2 infrastructure.
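For analysts, a rotating-key XOR is trivial to reverse once the key is known, because applying the same operation twice restores the input. The sketch below uses the 12-byte key reported above; the sample plaintext is a made-up placeholder, not real C2 infrastructure.

```python
from itertools import cycle

KEY = b"xnasff3wcedj"  # 12-byte key reported in the analysis


def xor_rotating(data: bytes, key: bytes = KEY) -> bytes:
    """XOR each byte with the key, repeating the key as needed."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


plaintext = b"https://c2.example.invalid/gate"  # hypothetical string
ciphertext = xor_rotating(plaintext)
recovered = xor_rotating(ciphertext)
print(recovered == plaintext)  # True
```

The same function both encrypts and decrypts, so recovering the dropper’s hidden strings only requires running it over the encrypted blobs.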

Anti-Analysis and Supply Chain Risk

The dropper featured sophisticated anti-analysis checks, including:

- VM Detection: Scanning for VBoxGuest.sys and vmhgfs.sys.

- Process Blacklisting: Terminating if it detected wireshark.exe or x64dbg.exe.

- GPU Scoring: Prioritizing gaming PCs (NVIDIA RTX) for potential credential theft or crypto-jacking.

This incident highlights the fragility of the modern supply chain. When developers scramble to access a leak, they often bypass security protocols, leading to immediate infection by Vidar (which steals browser credentials and crypto wallets) and GhostSocks (which turns the victim’s machine into a residential proxy).

Conclusion: Lessons for the Modern Enterprise

The Claude Code leak was not a failure of AI logic, but a failure of artifact hygiene. It proves that even when AI builds the software, humans must still guard the gate. For enterprise leaders, the lesson is that security doesn’t just live in the code; it lives in the configuration.

As teams move toward delegating build and release tasks to agents, the need for tools like VibeGuard becomes non-negotiable. VibeGuard acts as a pre-publish security gate, scanning for source map exposure, hardcoded secrets, and packaging-configuration drift. Organizations must treat their development environments as governed infrastructure, using isolated workspaces and network boundaries to contain the blast radius of a compromised dependency.

Ultimately, Anthropic’s mistake was mundane, but its consequences were profound. It reminded the world that in the age of autonomous agents, a single missing line in .npmignore can still compromise the most advanced technology on the planet.
